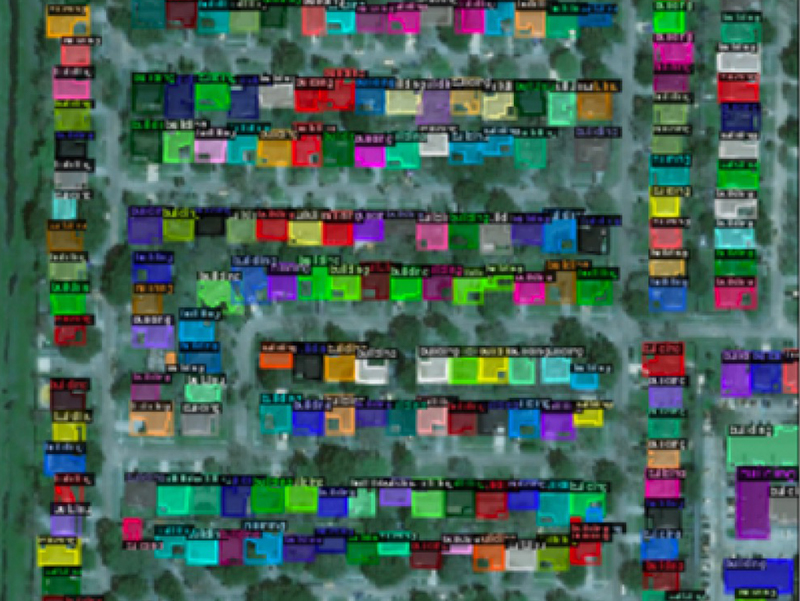

Damage Assessment

Multi-Disaster Building Damage Segmentation and Classification using Satellite Imagery

Satellite imagery plays an increasingly important role in post-disaster building damage assessment. Unfortunately, current methods still rely on manual visual interpretation, which is time-consuming and prone to error. In this project, we address these limitations by automating the process. We create a deep learning model that combines a semantic segmentation convolutional neural network (CNN) for building identification with a second CNN for building damage classification. This project was originally developed for Stanford’s CS325B (Data for Sustainable Development) class and the xView2 Challenge.
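One way to picture how the two networks fit together is the minimal sketch below. It is not the project's actual code. It assumes the segmentation stage yields a binary building mask, that each connected region of that mask is cropped and passed to the classifier, and that the predicted label is painted back onto the building's pixels. The names `connected_components`, `assess_damage`, `toy_segment`, and `toy_classify`, along with the integer damage labels, are hypothetical stand-ins; the toy functions are simple thresholds, not trained CNNs.

```python
from collections import deque


def connected_components(mask):
    """Group building pixels into separate buildings (4-connectivity)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    components = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill from this unvisited building pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            components.append(pixels)
    return components


def assess_damage(image, segment_fn, classify_fn):
    """Stage 1: segment buildings. Stage 2: classify each building crop."""
    mask = segment_fn(image)
    damage_map = [[0] * len(row) for row in mask]  # 0 = background
    for pixels in connected_components(mask):
        ys = [y for y, _ in pixels]
        xs = [x for _, x in pixels]
        # Crop the bounding box around this building for the classifier.
        crop = [row[min(xs):max(xs) + 1] for row in image[min(ys):max(ys) + 1]]
        label = classify_fn(crop)
        for y, x in pixels:
            damage_map[y][x] = label
    return damage_map


# Hypothetical stand-ins for the two CNNs (assumptions, not real models).
def toy_segment(image):
    return [[1 if px > 0 else 0 for px in row] for row in image]


def toy_classify(crop):
    values = [px for row in crop for px in row]
    # Placeholder labels: 1 = "undamaged", 2 = "damaged".
    return 1 if sum(values) / len(values) >= 5 else 2


image = [
    [0, 0, 0, 0, 0],
    [0, 9, 9, 0, 2],
    [0, 9, 9, 0, 2],
    [0, 0, 0, 0, 0],
]
result = assess_damage(image, toy_segment, toy_classify)
```

In a real pipeline, `segment_fn` and `classify_fn` would wrap trained models, and the classifier would likely see both pre- and post-disaster imagery; the sketch only illustrates the hand-off between the two stages.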